Developing Software with Agents

Table of Contents

- Overview

- Mission: Impossible

- Mission: Possible

- Milestone 1 — Project Scaffolding

- Milestone 2 — Calculator Engine (engine.rs)

- Milestone 3 — UI (app.rs + main.rs)

- Milestone 4 — Polish

- Planception

- 1. 🟡 Integration test access (structural issue)

- 2. 🟡 Missing decimal_point() branch

- 3. 🟡 Backspace on negative numbers

- 4. 🟢 percent() with pending operation ambiguity

- Context Control

- The Grind

- Pro Tips

- Test-Driven-Development and Behavior-Driven-Development

- BDD-Style Tests (Given/When/Then)

- Debtmap

- Clippy

- The Calculator

- Conclusion

Overview

In my previous post on using AI to write software, I was of the opinion that we should manually write interfaces and allow an agent to fill in the details. This would give the programmer more control over how an application gets structured instead of letting the agent run wild.

My opinion has changed over the last three months. I've stopped writing code by hand completely. Instead, I write a few lines of example code (maybe) and give the generated code a quick skim. I'll explain the techniques I'm using that make this work.

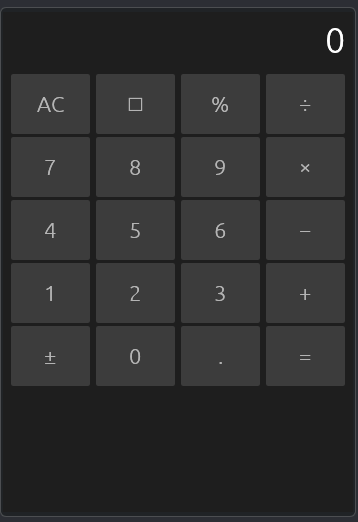

In this post we will be creating a compiled desktop application in the form of a... calculator! It needs to fit in a long blog post, so it's intentionally small and will only have basic features. Nothing too fancy.

I'll be interleaving my thoughts, explanations, and snippets from the interactive session with the agent. The snippets will use colored blocks so you can easily identify messages coming and going.

Mission: Impossible

The first thing we need is a plan. And not just any plan, but one that accurately captures what we actually want. Let's try a naive prompt first:

[User]

Propose a plan to create a calculator app.

My current LLM of choice responded with this (and a complete opinionated plan on everything else too):

[Reasoning]

The user wants me to propose a plan to create a calculator app. This is a straightforward planning request - no need to load any skills or do anything fancy. Let me provide a clear, structured plan.

[Response]

Build a web-based calculator app with a clean UI, standard arithmetic operations, and keyboard support. The project will be simple, dependency-free (vanilla HTML/CSS/JS), and easy to extend.

...(wall of text with every other detail hallucinated by the model)...

Would you like me to go ahead and build this? I can create all three files and have a working calculator ready in moments.

Yeah, no. A web browser is a big dependency so we are already in hallucination land. Anyway, I know that I want a compiled desktop application, not a web app. We could start the plan by saying "use Rust", but that doesn't generalize very well. The agent will still make all the other choices, and it might even end up with a web app using something like Tauri.

Continuing the session:

[User]

But I want it in Rust.

[Response]

Rust Calculator App — Revised Plan

- Overview

Build a GUI calculator app in Rust using the iced framework — a native, cross-platform, Elm-inspired GUI library. Clean architecture, fast compilation, and a responsive layout.

...(wall of text with every other detail hallucinated by the model)...

Shall I go ahead and build it? I'll generate the full project with Cargo.toml and all source files, ready to run with cargo run.

So this sort-of works. However, the wall of text contains a bunch of assumptions and things that I either don't want or didn't get to choose. We need to play whack-a-mole in order to eventually get to a point where the plan seems reasonable.

Mission: Possible

Rather than going back and forth with the agent to nail down everything we want, let's start a new session and tell it to ask us questions:

[User]

Propose a plan to create a calculator app. Please ask clarifying questions for any ambiguous details.

For the most part, models are trained on basically the entire internet and every book ever written (more or less), so the model should be able to figure out what needs to be clarified before implementing. Here is the response:

[Reasoning]

The user wants me to propose a plan for creating a calculator app. Before jumping into a plan, I should ask clarifying questions about the ambiguous details. Let me think about what I need to know:

- Platform/target (web, mobile, desktop, CLI?)

- Features (basic, scientific, programmer, graphing?)

- Tech stack preferences

- UI/UX design preferences

- Target audience

- Any specific requirements

[Response]

Before I propose a detailed plan, I'd like to nail down a few things:

- Platform

- Web app, mobile app (iOS/Android), desktop app, or CLI?

- If web: is this a single-page app, or should it also work offline (PWA)?

- Feature Scope

What type(s) of calculator functionality do you need?

- Basic — add, subtract, multiply, divide, percent

- Scientific — trig, logs, exponents, roots, constants (π, e)

- Programmer — binary/octal/hex conversion, bitwise ops

- Graphing — plot functions on a coordinate plane

- Unit/currency conversion — length, weight, temp, live exchange rates?

- History — recall past calculations?

- Tech Stack Preferences

- Do you have a preferred language/framework (e.g., React, Vue, Svelte, plain HTML/CSS/JS, Swift, Kotlin, Python/Tkinter)?

- Any styling preferences (Tailwind, Material UI, custom CSS)?

- Design & UX

- Should it mimic a classic physical calculator layout, or have a modern/minimal design?

- Light mode, dark mode, or both (theme toggle)?

- Any accessibility requirements (keyboard nav, screen reader support)?

- Non-Functional Requirements

- Does it need tests (unit, integration, e2e)?

- Is there a target deployment (GitHub Pages, App Store, internal tool)?

- Any performance or browser/device constraints?

- Is this a learning project, a portfolio piece, or production software?

- Timeline & Scope

- Are you looking for an MVP first (iterate later), or a full-featured deliverable?

- Any hard deadlines?

Once you answer these (even partially — "I don't care, you decide" is fine for any of them), I'll draft a concrete plan with milestones, file structure, and implementation order.

Now this is something we can work with! It asks questions about the design and we can choose from some options, or add our own options, or ignore some. This will help us get closer to what we want, and as a bonus we might think of something while reviewing the options.

[User]

- desktop app. let's explore frameworks

- basic calculator

- rust

- minimal

- basic unit tests. desktop app (linux only). production software

- MVP

The model responded pretty comprehensively. I've omitted a lot of details here to keep it short:

[Response]

Rust Desktop GUI Framework Comparison (...table of frameworks, stats, license, paradigm, etc...)

My Recommendation: egui / eframe

Why:

- Fastest path to MVP — immediate mode means you describe UI in pure Rust, no DSL or markup files

- Minimal by default — clean, simple aesthetic out of the box

- Logic easily separable — calculator engine is pure Rust, trivial to unit test

- Lightweight binary — no heavy system dependencies beyond WGPU

- Active community — well-documented, good examples

Runner-up: Iced — if you prefer an Elm architecture (message passing, clearer separation of state/update/view). Slightly more boilerplate but arguably cleaner for production code long-term.

Proposed Plan (egui/eframe)

Milestone 1 — Project Scaffolding

calculator/

├── Cargo.toml

├── src/

│ ├── main.rs # App entry, window setup

│ ├── app.rs # eframe App trait impl (UI)

│ ├── engine.rs # Calculator logic (pure, no UI)

│ └── error.rs # Error types (divide-by-zero, overflow)

└── tests/

└── engine_tests.rs # Unit tests for calculator logic

Milestone 2 — Calculator Engine (engine.rs)

(...details...)

Milestone 3 — UI (app.rs + main.rs)

(...details...)

Milestone 4 — Polish

(...details...)

Questions before we start:

- egui or would you prefer Iced (or another framework)?

- Any keyboard shortcut preferences beyond the obvious?

- Want me to also set up a CI config (GitHub Actions) for running tests on Linux?

The great thing about the "Ask clarifying questions" trick is that the model is more likely to continue asking questions, as we can see at the end of this response.

Planception

The proposed plan is a high-level plan. It covers the goal of the app and its overall structure. Depending on complexity, it may also have a flowchart showing how data flows through the application. However, this is not enough to proceed with implementation.

What we should do next is:

- Write this plan so we have it for later

- Create a "detailed plan"

[User]

This plan looks good. Please write it to the .plans directory and then wait for further instructions.

We may have multiple "detailed" plans, so we keep this high-level plan around to load into the context of each session. That way the agent can see the overall design along with the detailed section that it's been assigned.

[Response]

Plan written to .plans/calculator-app.md. Ready for your next instructions.

The plan has 4 milestones, and we need to split it into individual implementation phases. Usually the model has already split the milestones/phases appropriately, but since this app is so small I think we can combine milestones 1 and 2.

The "detailed plan" needs to be created as you go for each phase. You cannot pre-generate all the detailed plans up front, and you cannot tell an agent to generate the detailed plans autonomously either. This is for two reasons:

- The implementation of one phase can have unforeseen effects on later phases depending on the implementation.

- The detailed plan itself might have issues that we want to address.

Here is how to generate a detailed plan:

[User]

Please generate a detailed plan to implement milestone 1 and 2.

- Include the reasoning behind choices we made (if available).

- Include an "acceptance criteria" section.

- Write the detailed plan to

.plans/phase-1-and-2-detailed.md.

After writing the plan, please review it and report any problems with the plan, and then wait for further instructions.

The "detailed" plan should contain some code samples. Depending on model and harness combination, you may get some snippets with "// TODO", or you may get full implementations of large chunks of code. If you don't get any code in the detailed plan, then also add to the prompt:

Include code snippets in the detailed implementation.

Here are my thoughts for why I think each part is needed in the detailed plan:

- Reasoning

- Agents will sometimes pick an easy implementation over a complex one. Like when using a trait for something trivial that could be a free function, the agent will occasionally just remove the trait because it's "over-engineered" (That's from the reasoning trace).

- Acceptance criteria

- This gives the agent something it can check to ensure that the implementation is what we expected. It might write tests or just evaluate what it wrote. If you have very specific acceptance criteria you want to include, then list them as part of the plan generation request. If they're incompatible with the original plan, we'll find out soon.

- Code snippets

- We are going to have the model review the plan, so it needs some code snippets to work with.

After a short time:

[Response]

Review — Problems Found

After re-reading the plan, I found 4 issues worth flagging:

1. 🟡 Integration test access (structural issue)

The plan puts tests in tests/engine_tests.rs (integration tests), but the project is a binary crate (src/main.rs). Rust integration tests in tests/ cannot access internal modules of a binary crate — they can only test the binary as a black box via std::process::Command.

Fix: Either:

- (a) Add a src/lib.rs that re-exports engine and error modules (recommended — cleanest separation), or

- (b) Move tests into engine.rs as #[cfg(test)] mod tests { ... } (simpler but puts tests alongside code).

I'd recommend (a) — it aligns with the "engine is an independent, testable library" goal.

2. 🟡 Missing decimal_point() branch

The behavior spec for decimal_point() covers:

- Already has . → no-op

- waiting_for_operand or just_evaluated → set to "0."

But there's no "else" branch for the normal case (e.g., display is "5", user presses .). It should append "." → "5.".

3. 🟡 Backspace on negative numbers

If display is "-5" and user presses ⌫, display becomes "-", which isn't a valid number. The spec should specify: if after removal the display is "-" or empty → reset to "0".

4. 🟢 percent() with pending operation ambiguity

What does 5 + 50 % do? Some calculators interpret this as 5 + (5×0.5) = 7.5, others as 5 + 0.5 = 5.5. The plan's "divide display by 100" gives the latter. This is fine for MVP, but worth documenting as a conscious choice so it's not a surprise during implementation.

Severity summary: Issue #1 is the most important — it affects project structure. Issues #2–3 are spec gaps that would cause bugs during implementation. Issue #4 is just a documentation note.

Want me to amend the plan to fix these, or proceed to implementation as-is?

So even on a calculator at phase 1 we have some issues that need to be addressed. This is why I mentioned previously that we need to perform this step manually instead of letting the agent run free all day. These issues will start as "no big deal" and eventually compound as more accumulate in each phase. Resolving them here prevents the debt accumulation from occurring at all.
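To make issue 1 concrete, here is a minimal single-file sketch of option (a). The inline modules stand in for src/engine.rs and src/error.rs, and `divide`, `CalcError`, and the package name `calculator` are my own placeholders rather than anything taken from the actual plan.

```rust
// Single-file sketch. In the real project, src/lib.rs would only contain
// `pub mod engine;` and `pub mod error;`, with the bodies in their own files.

pub mod error {
    /// Hypothetical error type; the plan's real variants are not shown in this post.
    #[derive(Debug, PartialEq)]
    pub enum CalcError {
        DivideByZero,
    }
}

pub mod engine {
    use super::error::CalcError;

    /// Hypothetical engine function, here only to give a test something to call.
    pub fn divide(lhs: f64, rhs: f64) -> Result<f64, CalcError> {
        if rhs == 0.0 {
            Err(CalcError::DivideByZero)
        } else {
            Ok(lhs / rhs)
        }
    }
}

// With a library target in place, tests/engine_tests.rs can import the engine
// like any other dependency (assuming the package is named `calculator`):
//
//     use calculator::engine::divide;
//     use calculator::error::CalcError;
//
//     #[test]
//     fn divide_by_zero_is_an_error() {
//         assert_eq!(divide(1.0, 0.0), Err(CalcError::DivideByZero));
//     }
```

src/main.rs then imports the same library crate instead of declaring the modules itself, so the binary and the integration tests share one copy of the engine.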

In this case I'm fine with what the agent recommended. But many times there will be issues where you actually do need to make a critical decision.
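Issue 4 is a small example of that kind of decision. The sketch below shows the two readings of `5 + 50 %` from the review side by side; the function names and signatures are hypothetical and only illustrate the arithmetic.

```rust
/// "Absolute" reading, matching the plan's "divide display by 100":
/// the pending operand is ignored, so 50 % becomes 0.5.
pub fn percent_absolute(_pending_operand: f64, display: f64) -> f64 {
    display / 100.0
}

/// "Relative" reading used by some calculators:
/// 50 % means 50 % of the pending operand, so with 5 pending it becomes 2.5.
pub fn percent_of_pending(pending_operand: f64, display: f64) -> f64 {
    pending_operand * display / 100.0
}
```

With 5 pending and 50 on the display, the first reading gives 5 + 0.5 = 5.5 and the second gives 5 + 2.5 = 7.5. The plan went with the first for the MVP.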

[User]

Apply recommended fixes to the plan, review it again, and then wait for further instruction.

[Response]

Second Review — All Clear

The plan reads correctly with all four fixes applied:

- ✅ src/lib.rs added — exposes engine and error modules for integration tests

- ✅ decimal_point() else branch — appends "." in normal case

- ✅ Backspace on negative numbers — "-" → "0" explicitly handled

- ✅ Percent semantics documented — absolute percentage for MVP, with rationale

I found no remaining issues. The plan is internally consistent, the file structure references match throughout, and all behavior specs cover their edge cases. Ready for your next instructions.
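The two spec gaps that were fixed (issues 2 and 3) are easy to picture in code. This is only a sketch of how I'd expect the amended spec to read: the struct and its field names are guesses based on the flags named in the review, and checking the flags before the "already has a dot" case is my own assumption.

```rust
/// Hypothetical engine state; field names follow the flags mentioned in the review.
#[derive(Debug)]
pub struct Calculator {
    pub display: String,
    pub waiting_for_operand: bool,
    pub just_evaluated: bool,
}

impl Default for Calculator {
    fn default() -> Self {
        Self {
            display: String::from("0"),
            waiting_for_operand: false,
            just_evaluated: false,
        }
    }
}

impl Calculator {
    /// Handles the "." key.
    pub fn decimal_point(&mut self) {
        if self.waiting_for_operand || self.just_evaluated {
            // Start a fresh operand. Assumption: this check runs first, so a
            // stale display such as "3.5" does not block the new "0.".
            self.display = String::from("0.");
            self.waiting_for_operand = false;
            self.just_evaluated = false;
        } else if !self.display.contains('.') {
            // The branch the first draft was missing: "5" becomes "5.".
            self.display.push('.');
        }
        // Otherwise the display already has a "." and the key is a no-op.
    }

    /// Handles the backspace key.
    pub fn backspace(&mut self) {
        self.display.pop();
        // A lone "-" or an empty string is not a valid number, so reset to "0".
        if self.display.is_empty() || self.display == "-" {
            self.display = String::from("0");
        }
    }
}
```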

[User]

Proceed with implementation of phase 1.

Context Control

While the agent does its thing, we need to talk about context lengths. Your agent harness may have decided to spawn a subagent for the implementation, or maybe you handed off the context to another session, or maybe it's running in the same session. Regardless, you'll eventually run into issues with context length.

Luckily for us, the plan is already split up into milestones or implementation phases. We will use those as checkpoints for clearing out the context.

[Response]

Phase 1 is complete. Here's the summary:

(...details about what it did...)

Since we combined phase 1 and 2, we'll just move on to the next phase immediately:

[User]

implement part 2

[Response]

Phase 2 Complete ✅

(... report of the changes...)

Waiting for your next instructions.

Now that phase 1 and 2 are done, we can create a checkpoint that details the current implementation status:

[User]

Please write a progress file at .plans/current-progress.md

[Response]

Progress file written to .plans/current-progress.md. It tracks:

- Phase 1 & 2: ✅ Complete with all acceptance criteria checked off

- Phase 3 & 4: 🔲 Not started

- Deviations from plan documented for both phases

Waiting for your next instructions.

That was easy enough.

Next we need to start phase 3. We will again create a detailed plan, have it reviewed, and then begin implementation. You should either create a new empty session for this or perform a handoff:

[User]

/handoff Read the calculator-app and current-progress files from the .plans directory.

Please generate a detailed plan for phase 3 and write the plan to the .plans directory.

After writing the plan, please review it and report any problems with the plan, and then wait for further instructions.

We don't need to include the extra instructions like the "reasoning" and "acceptance criteria" as before because the agent will be able to figure that out based on what's already in the current-progress.md file and the other detailed plan files.

[Response]

The plan is complete and accurate. Let me now provide my review report:

Review Report for Phase 3 Plan

✅ Strengths

(...stuff...)

⚠ Issues / Gaps Found

(...minor issues, nothing to worry about...)

Summary

The plan is solid and implementation-ready. The critical fix is #4 (Shift modifier guard for digit keys to prevent conflict with * and % text events). Issue #1 (error color) and #6 (background color) are minor but should be addressed during implementation. Issues #2, #3, #5 are cosmetic/organizational and will be obvious during coding.

Awaiting further instructions.

Proper planning can take up a lot of context. So if you are running low on context (35% or more already used) after finalizing the detailed plan, you can launch a new session and have the agent load the plan files. This project is small so we'll continue in the same session:

[User]

proceed with implementation

The Grind

We need to repeat the process for each phase of the plan until the feature/project is implemented. To recap, the steps are:

1. Start with a high-level plan

2. Generate detailed plan (maybe in new session, depending on context availability)

3. Review detailed plan

4. Implement detailed plan (in new session, usually)

5. Update progress file (checkpoint)

6. GOTO (2)

Agents will sometimes update the progress file automatically after completing implementation. If yours doesn't, just remind it to update the progress file once it's done with a phase.

Pro Tips

Here are a few things that can help with this workflow.

Test-Driven-Development and Behavior-Driven-Development

We can help agents work more effectively by using concepts from TDD and BDD.

Specifically, if we include guidelines in an AGENTS.md file to structure tests in a TDD/BDD way, then the agent will have more context on how the project works and should be able to implement features more effectively. This is because the tests act as regression tests as the agent makes changes. If the agent breaks something, it will read the failing test, see the name and the code that gets run, and be better equipped with knowledge of the codebase for the specific thing it's touching.

Here is a sample of what you could include in your AGENTS.md:

BDD-Style Tests (Given/When/Then)

Structure tests with clear Given/When/Then comments. Each section should contain actual code.

// DO NOT: no BDD structure, inputs not separated from assertion
fn preview_resolution_full_res() {
    let mut config = AppConfig::default();
    config.preview_divisor = 1;
    let res = config.preview_resolution([1920, 1080]);
    assert_eq!(res, (1920, 1080));
}

// DO: tests meaningful behavior with proper BDD structure
fn preview_resolution_correctly_calculates_with_divisor_of_one() {
    // Given a configuration having a preview_divisor of 1.
    let mut config = AppConfig::default();
    config.preview_divisor = 1;

    // When we calculate the preview resolution.
    let res = config.preview_resolution([1920, 1080]);

    // Then the resolution is the same.
    assert_eq!(res, (1920, 1080));
}
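Applied to this post's calculator, the same guideline might look like the sketch below. The `add` function and the `Overflow` variant are hypothetical stand-ins (the plan only says error.rs covers divide-by-zero and overflow), so treat the names as assumptions.

```rust
/// Hypothetical error type for the sketch.
#[derive(Debug, PartialEq)]
pub enum CalcError {
    Overflow,
}

/// Hypothetical engine function: adds two numbers and reports an overflow
/// when the result is no longer a finite f64.
pub fn add(lhs: f64, rhs: f64) -> Result<f64, CalcError> {
    let sum = lhs + rhs;
    if sum.is_finite() {
        Ok(sum)
    } else {
        Err(CalcError::Overflow)
    }
}

#[cfg(test)]
mod tests {
    use super::*;

    #[test]
    fn add_reports_overflow_when_the_sum_is_not_finite() {
        // Given two operands at the upper limit of f64.
        let lhs = f64::MAX;
        let rhs = f64::MAX;

        // When we add them.
        let result = add(lhs, rhs);

        // Then the engine reports an overflow instead of returning infinity.
        assert_eq!(result, Err(CalcError::Overflow));
    }
}
```

The test name reads as a sentence, so when it fails the agent sees which behavior broke before it even opens the file.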

Debtmap

You can use the Debtmap tool to analyze your code. This can help identify locations with high technical debt and then you can have an agent fix it. The tool comes with an "LLM Copy" shortcut that allows you to copy an issue directly to Markdown so an agent can address the problem.

Clippy

We can't forget our good pal Clippy. Since agents write the code now, pedantic should be the only lint level you use. It can be configured in Cargo.toml at the workspace or crate level (in a workspace, each member crate also opts in with workspace = true under its own [lints] table). Don't forget to allow lints that are too annoying for your project:

# in a workspace

[workspace.lints.clippy]

pedantic = { level = "warn", priority = -1 }

wildcard_imports = "allow"

# include whatever other lint config you want

# for a single crate

[lints.clippy]

pedantic = { level = "warn", priority = -1 }

wildcard_imports = "allow"
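Not every exception has to be project-wide. When a pedantic lint is wrong for one spot only, it can be allowed on that item in the source instead. A small sketch, where `average` is a hypothetical helper and not part of the calculator from this post:

```rust
/// Hypothetical helper: the mean of a list of results, or `None` if it is empty.
// `clippy::cast_precision_loss` is a pedantic lint that fires on `usize as f64`.
// Allowing it here keeps the exception next to the code it applies to.
#[allow(clippy::cast_precision_loss)]
pub fn average(values: &[f64]) -> Option<f64> {
    if values.is_empty() {
        return None;
    }
    Some(values.iter().sum::<f64>() / values.len() as f64)
}
```

This keeps the Cargo.toml allow list short, and the agent sees the justification right where it would otherwise be tempted to "fix" the cast.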

The Calculator

[Response]

Phase 4 (Polish) is complete. Here's a summary of everything implemented:

Phase 4 — Polish ✅ Complete

Steps implemented in order:

(...a giant table of things it did...)

Key implementation fixes applied:

(...issues it ran into and fixed...)

Let's see what it looks like:

I confirmed that it does indeed calculate.

Conclusion

This post showed a method of working with coding agents in a way that allows you to more easily specify requirements for your applications. This is done through having the agent ask clarifying questions. Additionally, detailed plan files are created and reviewed by an agent to find potential problems.
